tranCendenZ, 2[H]4U (joined Jun 6, 2004; 3,844 messages)

Shemazar said: Something just doesn't seem right with the T-Break review in regards to the X800XT benches.

The T-Break review is irrelevant now that the SM3.0 patch is out.

etc


jimpo said: Besides, the X800XT in that Anand review IS NOT the X800XT-PE. It is just the X800XT. The XT-PE will be even faster.

tranCendenZ said: The old non-SM3.0 benchmarks are irrelevant now.

Final Words

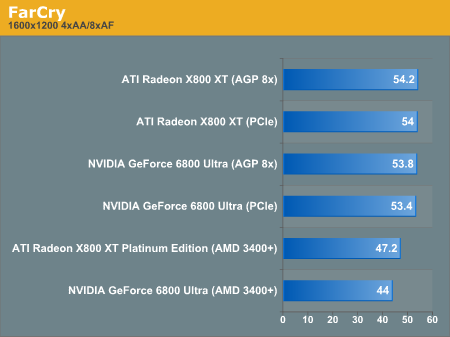

Both of our custom benchmarks show ATI cards leading without anisotropic filtering and antialiasing enabled, with NVIDIA taking over when the options are enabled. We didn't see much improvement from the new SM3.0 path in our benchmarks either. Of course, it just so happened that we chose a level that didn't really benefit from the new features the first time we recorded a demo. And, with the mangoriver benchmark, we were looking for a level to benchmark that didn't follow the style of benchmarks that NVIDIA provided us with in order to add perspective.

Even some of the benchmarks with which NVIDIA supplied us showed that the new rendering path in FarCry isn't a magic bullet that increases performance across the board through the entire game.

Image quality of the two SM2.0 paths is on par, and the SM3.0 path on NVIDIA hardware shows negligible differences. The very slight variations are most likely just small fluctuations between the mathematical output of a single pass and a multipass lighting shader. The difference is honestly so tiny that you can't call either rendering lower quality from a visual standpoint. We will still try to learn from CryTek what exactly causes the differences we noticed.

The main point that the performance numbers make is not that SM3.0 has a speed advantage over SM2.0 (as even the opposite may be true), but that single pass per-pixel lighting models can significantly reduce the impact of adding an ever increasing number of lights to a scene.
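To make the single pass versus multipass point concrete, here is a rough sketch of how the number of geometry passes grows with the light count. It is only an illustration: `lighting_passes` is a made-up helper, and the limit of 4 lights per pass is an assumed figure for the example, not a number taken from the review or from the FarCry patch.

```python
import math

def lighting_passes(num_lights: int, lights_per_pass: int) -> int:
    """Number of times the geometry must be drawn to apply num_lights lights.

    lights_per_pass=1 models a multipass shader (one pass per light);
    a larger value models a shader that loops over several lights in one pass.
    """
    if num_lights == 0:
        return 1  # the base/ambient pass still draws the geometry once
    return math.ceil(num_lights / lights_per_pass)

# Compare a one-light-per-pass path with a hypothetical four-lights-per-pass path.
for n in (1, 2, 4, 8):
    multi = lighting_passes(n, lights_per_pass=1)
    single = lighting_passes(n, lights_per_pass=4)  # 4 is an assumed limit
    print(f"{n} lights: multipass draws {multi}x, batched draws {single}x")
```

The per-pixel lighting math is the same in both cases; what the batched path saves in this model is the repeated geometry submission and framebuffer blending for every extra light.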

It remains to be seen whether or not SM3.0 offers a significant reduction in complexity for developers attempting to implement this advanced functionality in their engines, as that will be where the battle surrounding SM3.0 will be won or lost.

Shemazar said: Are they? Maybe you didn't read the full article at AnandTech...

nweibley said: My God! You don't say! Are you telling me that a plain-jane GT beat the XT-PE when the GT got to use essentially a proprietary path, and the XT-PE had no such luck?


Kingofl337 said: Plain jane costs $500.00. Not that the ATI cards are cheap. But damn, that's a lot of green.


Indeed. But it is Crytek's oddball decision to alienate ATI by not disclosing ANY plans to implement 3Dc, which has been praised for its simplicity and ease of implementation. I find it odd, considering that a very large segment of their audience runs ATI cards. I can only speculate about why they chose not to implement 3Dc (at least not yet), but that is nothing but speculation.

tranCendenZ said: It was ATI's choice not to support SM3.0; don't blame Nvidia or Crytek for ATI's decision. Looks like they underestimated Nvidia.

P.S. - the SM3.0 path won't be proprietary once next year's ATI and Nvidia cards arrive, because it is the future Shader Model standard that will be in all games.

chrisf6969 said: SM3.0 is not proprietary... It will be part of DX9.0C and ATI will have it in their next generation of cards. They will also have to add 32-bit precision.

AND I will bet that when ATI adds 32-bit (instead of 24-bit), their next gen of cards will lose a lot of performance.

Considering how much more work Nvidia is doing by using 32-bit precision, it's amazing they can keep up, much less beat a much faster-clocked GPU by ATI.

I do not disagree, but isn't the point of releasing each generation to improve upon the last? Everyone is readily admitting the FX line had its shortcomings that nVidia needed to address, and they did. They also made a faster, better card than the FX. ATI, on the other hand, had something really good going with the 9800s. Good IQ, good performance, etc. They made the decision that the 9800's architecture would be sufficient for their next generation of cards/games, and so they improved its performance too, and added some features to it, but did not redesign it.

Bad_Boy said: i think the fx series was rushed, and the 6800 has really been thought out. i mean if you think about it, the 6800 fixed everything the FX had problems with, and then some. that's just my opinion on it.

http://www.crytek.com/screenshots/index.php?sx=polybump&px=poly_02.jpg

Merlin45 said: just a question, how much are normal maps used in far cry, because if they aren't used very heavily then 3dc won't add anything.

pro·pri·e·tar·y

nweibley said: And, at this point in time, SM3.0 basically is a proprietary path. Who else besides nVidia is using it yet?

Ok, if you can find it in your heart, forgive me. nVidia is the EXCLUSIVE user of SM3.0 right now. Better?

chrisf6969 said: pro·pri·e·tar·y

adj.

Exclusively owned; private: a proprietary hospital.

Owned by a private individual or corporation under a trademark or patent: a proprietary drug.

Nvidia does not own SM3.0, and not only can it be used by anyone, IT WILL BE USED by ATI in their next generation. IT IS THE FUTURE CODE ALL VIDEO CARDS / GAMES WILL BE USING IN THE NEXT YEAR (approx.) or so.

Calling SM3.0 proprietary and useless is like calling DX8.0 proprietary & useless back when it first came out and DX7 was the standard. DX8.0 provided a huge leap forward... as should SM3.0 and DX9.0C (shoulda been called DX10).

SM3.0 is proven faster and more efficient. Real programming requires conditions and branching. Carmack (god) has been asking for 2 things: better programmability on GPUs (SM3.0) and FULL precision (32-bit). The 6800 is what he asked for; ATI won't have it until the next gen.

Basically, the future is now for Nv, whereas we still have to wait for ATI to bring the future.

Granted, there is very little difference between 24 & 32 currently, but 32-bit will ultimately lead to much more realistic graphics.
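For a feel of how small the 24 vs 32 bit gap is on a single value, here is a rough sketch that rounds a number to a given count of mantissa bits. The bit layouts are assumptions for illustration (16 explicit mantissa bits for a 24-bit float, 23 for a 32-bit float), `quantize` is a made-up helper, and exponent range limits are ignored.

```python
import math

def quantize(x: float, mantissa_bits: int) -> float:
    """Round x to the nearest value with the given number of explicit mantissa bits.

    Only mantissa rounding is modelled; exponent range and denormals are ignored.
    """
    if x == 0.0:
        return 0.0
    m, e = math.frexp(x)              # x == m * 2**e with 0.5 <= |m| < 1
    scale = 2 ** (mantissa_bits + 1)  # +1 accounts for the implicit leading bit
    return math.ldexp(round(m * scale) / scale, e)

FP24_MANTISSA = 16  # assumed layout: 1 sign, 7 exponent, 16 mantissa bits
FP32_MANTISSA = 23  # IEEE 754 single precision

coord = 0.7  # e.g. a texture coordinate
for name, bits in (("24-bit", FP24_MANTISSA), ("32-bit", FP32_MANTISSA)):
    err = abs(quantize(coord, bits) - coord)
    print(f"{name}: rounding error {err:.3e}")
```

One rounding step like this is tiny either way; the argument for 32-bit is that errors can accumulate over long shaders with many dependent operations, which this sketch does not model.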

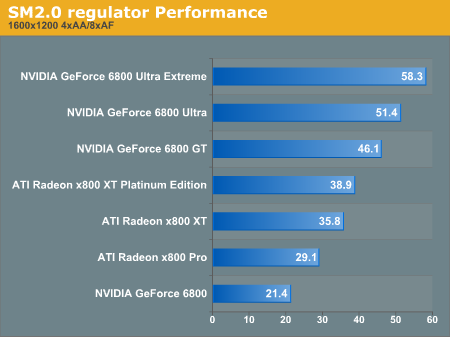

Vagrant Zero said: OH REALLY? ANANDTECH YOU SAY? The same Anandtech that shows a GT beating an XTPE pro in SM2 [and SM3] at 1600x1200 4x8x?

nweibley said: Ok, if you can find it in your heart, forgive me. nVidia is the EXCLUSIVE user of SM3.0 right now. Better?

There is very little difference in 24 and 32 bit besides performance. Why waste performance on something that seems so utterly useless at this point in time? Maybe the 6800s will last until we see the effects of 32 bit precision... but I doubt it.

True, I am running a Ti 4200 and I can play Far Cry on medium everything, granted it doesn't look flash and I get basically 35fps.

chrisf6969 said: Forgiven. I know the 6800 will last long enough to be useful with SM3.0 b/c that's happening now already with the FarCry patch 1.2. For IQ differences using 24 vs 32, it might, it might not. But look at how well the GF4ti4x00's are holding up considering their age.

nweibley said: nVidia is the EXCLUSIVE user of SM3.0 right now. Better?

Lezmaka said:

Myrdhinn said:The one thing I don't get is that Microsoft and anyone else down have said SM 3.0 won't increase the performance of a vid card but only makes coding shaders easier and adds some functions 2.0 doesn't have. So in that case, where is NV getting this huge performance leap from? Like c'mon, 20-30% increases when even the originator of 3.0 says it doesn't increase card performance.

Hey, everyone on [H]Forum using a 3Dlabs card (especially for gaming), please speak up. I want to count all of you.

Lezmaka said:

nweibley said:Hey, everyone on [H]Forum using a 3Dlabs card (especially for gaming), please speak up. I want to count all of you.

oqvist said: Listen, nVidia releases 10 beta drivers and 1 WHQL.

ATI releases 1 WHQL, 1 WHQL beta and 1 WHQL. It's a slight difference.

Generally people benchmark with WHQL-certified drivers for ATI and beta drivers for nVidia.

It's not okay.

InkSpot said: Nvidia never releases beta drivers; all those drivers floating around the net are Nvidia development drivers leaked by board partners and whatnot... At least I can't find any beta drivers on Nvidia's site.